Extending Fourier Neural Operators for Modeling Parameterized and Coupled PDEs

Left: 1D capacitively coupled plasma (CCP) — electron density and electric field dynamics. Right: Gray–Scott reaction-diffusion — labyrinthine pattern formation.

Abstract

Parameterized and coupled partial differential equations (PDEs) are central to modeling phenomena in science and engineering, yet neural operator methods that address both aspects remain limited. We extend Fourier neural operators (FNOs) with minimal architectural modifications along two directions. For parameterized dynamics, we propose a hypernetwork-based modulation that conditions the operator on physical parameters. For coupled systems, we conduct a systematic exploration of architectural choices, examining how operator components can be adapted to balance shared structure with cross-variable interactions while retaining the efficiency of standard FNOs. Evaluations on benchmark PDEs, including the one-dimensional capacitively coupled plasma equations and the Gray–Scott system, show that our methods achieve up to 55–72% lower errors than strong baselines, demonstrating the effectiveness of principled modulation and systematic design exploration.

Method

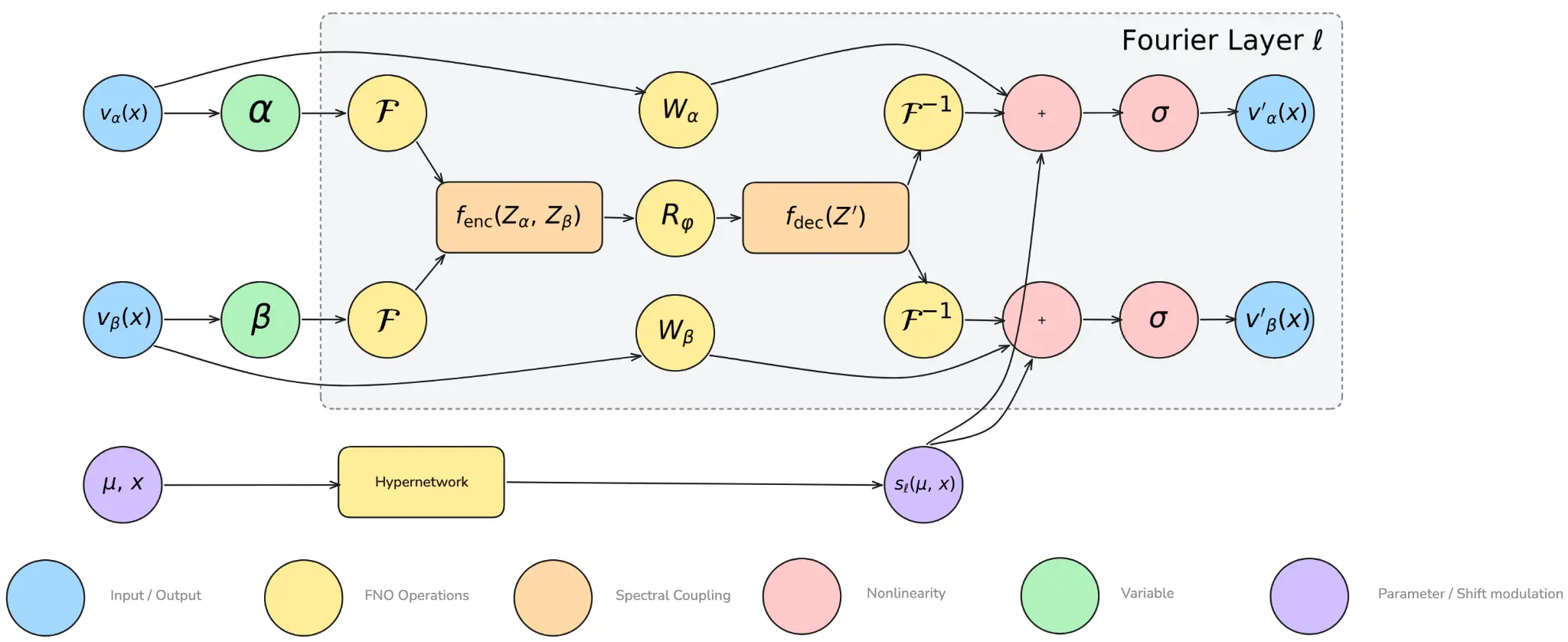

Two principled extensions to the standard Fourier Neural Operator (FNO) architecture.

hpFNO — Hypernetwork-based Parameter Modulation

For parameterized PDEs: conditioning the operator on physical parameters at every Fourier layer.

A compact hypernetwork takes the physical parameters and the position as input and produces layer-specific shift terms. Unlike HyperFNO, which infers all core weights, hpFNO infers only these shift biases, so the parameter-dependent modulation adds negligible overhead.
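The idea of a parameter-conditioned shift can be illustrated with a minimal NumPy sketch of a single 1D Fourier layer. This is not the authors' implementation: the function names (`spectral_conv_1d`, `hypernet_shift`, `hp_fourier_layer`), the two-layer tanh hypernetwork, the ReLU nonlinearity, and all sizes and parameter values are assumptions chosen for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)

def spectral_conv_1d(v, spec_w, modes):
    """Standard FNO spectral convolution keeping the lowest `modes` frequencies."""
    # v: (n_points, channels); spec_w: (modes, channels, channels), complex
    v_hat = np.fft.rfft(v, axis=0)
    out_hat = np.zeros_like(v_hat)
    out_hat[:modes] = np.einsum("kc,kcd->kd", v_hat[:modes], spec_w)
    return np.fft.irfft(out_hat, n=v.shape[0], axis=0)

def hypernet_shift(mu, x, hyper_params):
    """Hypothetical small MLP: (parameters, position) -> per-point channel shift."""
    W1, b1, W2, b2 = hyper_params
    mu_tiled = np.broadcast_to(mu, (x.shape[0], mu.shape[0]))
    inp = np.concatenate([mu_tiled, x[:, None]], axis=1)
    return np.tanh(inp @ W1 + b1) @ W2 + b2  # (n_points, channels)

def hp_fourier_layer(v, mu, x, spec_w, lin_w, hyper_params, modes):
    """Fourier layer whose only parameter-dependent term is an additive shift."""
    shift = hypernet_shift(mu, x, hyper_params)
    pre_act = spectral_conv_1d(v, spec_w, modes) + v @ lin_w + shift
    return np.maximum(pre_act, 0.0)  # ReLU used here for brevity (assumption)

# Toy sizes and parameter values, all hypothetical.
n_points, channels, n_params, hidden, modes = 64, 8, 3, 16, 12
x = np.linspace(0.0, 1.0, n_points)
v = rng.standard_normal((n_points, channels))
mu = np.array([0.5, 1.0, 2.0])
shape_w = (modes, channels, channels)
spec_w = 0.1 * (rng.standard_normal(shape_w) + 1j * rng.standard_normal(shape_w))
lin_w = 0.1 * rng.standard_normal((channels, channels))
hyper_params = (
    0.5 * rng.standard_normal((n_params + 1, hidden)), np.zeros(hidden),
    0.5 * rng.standard_normal((hidden, channels)), np.zeros(channels),
)

out = hp_fourier_layer(v, mu, x, spec_w, lin_w, hyper_params, modes)
print(out.shape)  # (64, 8)
```

In this sketch the spectral and pointwise weights are shared across all parameter values; only the shift changes with the parameters, which is why the extra cost is limited to the small hypernetwork.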

FNOₓ — Spectral Coupling for Multi-variable PDEs

For coupled systems: cross-variable interaction entirely in Fourier space.

The coupled variables are independently transformed to Fourier space, jointly encoded into a shared latent representation, filtered by a single spectral kernel, and then decoded back into per-variable representations. This captures cross-variable interactions while preserving FNO's efficiency.
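The encode, filter, decode pipeline described above can be sketched in NumPy for two variables on a 1D grid. The names `coupled_spectral_layer`, `enc`, `kernel`, and `dec`, the use of plain complex linear maps for the encoder and decoder, and all sizes are assumptions for illustration; the paper's actual operators may differ.

```python
import numpy as np

rng = np.random.default_rng(0)

def complex_normal(*shape, scale=0.1):
    """Random complex weights (illustrative initialization only)."""
    return scale * (rng.standard_normal(shape) + 1j * rng.standard_normal(shape))

def coupled_spectral_layer(u, w, enc, kernel, dec, modes):
    """Couple two variables entirely in Fourier space.

    u, w:   (n_points, channels) real fields for the two variables
    enc:    (2 * channels, latent) joint encoder
    kernel: (modes, latent, latent) single shared spectral kernel
    dec:    (latent, 2 * channels) decoder back to per-variable channels
    """
    n, c = u.shape
    # 1) Independent Fourier transforms, truncated to the lowest modes.
    u_hat = np.fft.rfft(u, axis=0)[:modes]
    w_hat = np.fft.rfft(w, axis=0)[:modes]
    # 2) Joint encoding into a shared latent representation.
    joint = np.concatenate([u_hat, w_hat], axis=1) @ enc
    # 3) One spectral kernel filters the shared latent, mode by mode.
    filtered = np.einsum("kl,klm->km", joint, kernel)
    # 4) Decode into per-variable spectra and return to physical space.
    out_hat = np.zeros((n // 2 + 1, 2 * c), dtype=complex)
    out_hat[:modes] = filtered @ dec
    out = np.fft.irfft(out_hat, n=n, axis=0)
    return out[:, :c], out[:, c:]

# Toy sizes, all hypothetical.
n_points, channels, latent, modes = 64, 4, 6, 12
u = rng.standard_normal((n_points, channels))
w = rng.standard_normal((n_points, channels))
enc = complex_normal(2 * channels, latent)
kernel = complex_normal(modes, latent, latent)
dec = complex_normal(latent, 2 * channels)

u_out, w_out = coupled_spectral_layer(u, w, enc, kernel, dec, modes)
print(u_out.shape, w_out.shape)  # (64, 4) (64, 4)
```

Because both variables pass through the same latent and kernel, the output for the first variable depends on the second, which is the cross-variable interaction; the cost remains that of FFTs plus per-mode matrix products, as in a standard FNO layer.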

Combined architecture: two coupled variables pass through shared spectral coupling while a hypernetwork injects parameter-conditioned shifts at every layer.

Results

Evaluated on two benchmark PDEs. Performance measured in normalized RMSE (nRMSE, lower is better). All experiments repeated 5× with different random seeds.
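The page does not spell out the nRMSE formula. A common convention, assumed here, divides the L2 norm of the prediction error by the L2 norm of the reference solution. The sketch below uses that assumed definition on a synthetic signal; the function name `nrmse` and the test signal are illustrative only.

```python
import numpy as np

def nrmse(pred, target):
    """Normalized RMSE, assumed here to be ||pred - target||_2 / ||target||_2."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    return np.linalg.norm(pred - target) / np.linalg.norm(target)

# Synthetic reference field and a prediction that is uniformly 10% too large.
grid = np.linspace(0.0, 2.0 * np.pi, 128)
target = np.sin(grid) + 2.0
pred = 1.1 * target

score = nrmse(pred, target)
print(round(score, 6))  # 0.1
```

Under this definition a perfect prediction scores 0 and predicting all zeros scores 1, so values above 1 (as in the diverging runs marked † further down) indicate errors larger than the signal itself.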

1D Capacitively Coupled Plasma (CCP)

Novel benchmark introduced in this work. Models are tested on three physical parameters independently: reaction rate, driving voltage, and ion mass. Reported as mean ± std.

| Model | Reaction rate | Driving voltage | Ion mass |
|---|---|---|---|
| FNOm | 0.0403 | 0.0791 | 0.0363 |
| FNOc | 0.0375 | 0.0873 | 0.0299 |
| CFNO | 0.0315 | 0.0428 | 0.0333 |
| HyperFNOc | 0.0278 | 0.0355 | 0.0253 |
| MWTc | 0.0409 | 0.0639 | 0.0403 |
| CMWNO | 0.0312 | 0.0526 | 0.0241 |
| DONc | 0.0844 | 0.2147 | 0.1035 |
| U-NETc | 0.1084 | 0.0844 | 0.1719 |
| FNOx | 0.0193 | 0.0345 | 0.0212 |
| pFNOx | 0.0194 | 0.0278 | 0.0142 |
| hpFNOx ★ | 0.0154 | 0.0192 | 0.0128 |

Governing Equations

Parameters: reaction rate, driving voltage, ion mass. 100 trajectories, 9:1 train/test split.

hpFNOₓ achieves the best nRMSE on all three parameters

e.g. driving voltage: 0.0192 vs. 0.0428 for the CFNO baseline, a 55% lower error
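The 55% figure follows from the relative error reduction between the two nRMSE values quoted above. A short check, with `relative_reduction` as an illustrative helper name:

```python
def relative_reduction(baseline, ours):
    """Fraction by which `ours` is lower than `baseline`."""
    return (baseline - ours) / baseline

# nRMSE values quoted in the text: CFNO baseline vs. hpFNOx.
reduction = relative_reduction(0.0428, 0.0192)
print(f"{100 * reduction:.1f}%")  # 55.1%
```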

Robustness to Input History Length

Performance on the reaction rate case as the number of input time steps decreases. Parameterized variants (hp) remain stable even with minimal history, while non-parameterized models diverge (†).

| Model | Longest input history | | | Shortest input history |
|---|---|---|---|---|
| FNOc | 0.0375 ± 0.0055 | 0.1324 ± 0.0304 | 1.0048† | 1.4484† |
| pFNOc | 0.0334 ± 0.0101 | 0.0923 ± 0.0276 | 0.2151‡ ± 0.0374 | 0.3757‡ ± 0.0679 |
| hpFNOc | 0.0196 ± 0.0021 | 0.0804 ± 0.0237 | 0.1515‡ ± 0.0070 | 0.1609‡ ± 0.0043 |
| FNOx | 0.0193 ± 0.0059 | 0.0406 ± 0.0093 | 1.2832† | 1.8143† |
| pFNOx | 0.0194 ± 0.0075 | 0.0464 ± 0.0158 | 0.1640‡ ± 0.0155 | 0.2522‡ ± 0.1376 |
| hpFNOx ★ | 0.0154 ± 0.0029 | 0.0317 ± 0.0022 | 0.1324‡ ± 0.0242 | 0.1372‡ ± 0.0100 |

Efficiency Analysis

hpFNOₓ achieves the best accuracy at a model size and training time comparable to standard FNO, making it a Pareto-optimal solution. Results are from the CCP scenario with varying driving voltage. Source: Figure 4, Jing et al., ICLR 2026.

(a) Model Size vs. Accuracy

Our methods cluster at low parameter count with best accuracy

(b) Training Time vs. Accuracy

Our methods achieve best accuracy without sacrificing training speed
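For readers unfamiliar with the term, a model is Pareto-optimal here if no other model is at least as good on both cost (model size or training time) and error, and strictly better on one. The sketch below shows that check on placeholder numbers. The model names and values are hypothetical and are not taken from the paper's figure.

```python
def pareto_optimal(models):
    """Return names of models not dominated in (cost, error); lower is better."""
    front = []
    for name, cost, err in models:
        dominated = any(
            (c <= cost and e <= err) and (c < cost or e < err)
            for _, c, e in models
        )
        if not dominated:
            front.append(name)
    return front

# Hypothetical (name, relative cost, error) triples, for illustration only.
models = [
    ("model_a", 1.0, 0.080),
    ("model_b", 1.1, 0.020),
    ("model_c", 5.0, 0.030),
    ("model_d", 4.0, 0.015),
]

front = pareto_optimal(models)
print(front)  # ['model_a', 'model_b', 'model_d']
```

In this toy example `model_c` is dominated because `model_b` is both cheaper and more accurate.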

Citation

If you find this work useful, please cite:

@inproceedings{jingextending,
  title={Extending Fourier Neural Operators for Modeling Parameterized and Coupled PDEs},
  author={Jing, Cheng and Mudiyanselage, Uvini Balasuriya and Verma, Abhishek and Bera, Kallol and Rauf, Shahid and Lee, Kookjin},
  booktitle={The Fourteenth International Conference on Learning Representations}
}