Overview

The future of a chaotic system is not a single path but a distribution over paths. This lecture addresses that stochasticity, which is crucial for world models where a single deterministic prediction is insufficient. We derive score-based diffusion models from the variance-preserving SDE (VP-SDE) framework, show how denoising score matching provides a tractable training objective, and apply the method to probabilistic forecasting of the Lorenz-96 atmospheric model. The lecture connects to DeepMind's GenCast, which outperforms ECMWF's operational ensemble forecast (ENS) on 97.2% of the forecasting targets evaluated.
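For orientation, the main objects in that derivation can be written down compactly. The block below is a summary sketch in the notation of Song et al. [1], not a transcription of the slides: the noise schedule \beta(t), the kernel coefficients \alpha(t) and \sigma(t), the weighting \lambda(t), and the score network s_\theta are assumed symbols, and the lecture's own notation may differ.

```latex
\begin{align}
% Forward VP-SDE: data is gradually turned into noise under schedule \beta(t)
\mathrm{d}x &= -\tfrac{1}{2}\beta(t)\,x\,\mathrm{d}t + \sqrt{\beta(t)}\,\mathrm{d}w \\
% Gaussian perturbation kernel implied by the forward SDE
p_{0t}(x_t \mid x_0) &= \mathcal{N}\!\big(x_t;\ \alpha(t)\,x_0,\ \sigma(t)^2 I\big),
\qquad \alpha(t) = \exp\!\Big(-\tfrac{1}{2}\int_0^t \beta(s)\,\mathrm{d}s\Big),
\qquad \sigma(t)^2 = 1 - \alpha(t)^2 \\
% Score of the kernel, using x_t = \alpha(t)\,x_0 + \sigma(t)\,\varepsilon
\nabla_{x_t} \log p_{0t}(x_t \mid x_0) &= -\frac{x_t - \alpha(t)\,x_0}{\sigma(t)^2} = -\frac{\varepsilon}{\sigma(t)} \\
% Denoising score matching objective: regress the network onto the kernel score
\mathcal{L}(\theta) &= \mathbb{E}_{t,\,x_0,\,\varepsilon \sim \mathcal{N}(0, I)}
\Big[\lambda(t)\,\big\| s_\theta(x_t, t) + \varepsilon / \sigma(t) \big\|^2\Big] \\
% Reverse-time SDE used for sampling, with s_\theta standing in for the true score
\mathrm{d}x &= \big[-\tfrac{1}{2}\beta(t)\,x - \beta(t)\,\nabla_x \log p_t(x)\big]\,\mathrm{d}t + \sqrt{\beta(t)}\,\mathrm{d}\bar{w}
\end{align}
```

The objective is tractable because the kernel score has the closed form in the third line, so training needs only samples of x_0, t, and \varepsilon rather than the unknown marginal score \nabla_x \log p_t(x).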

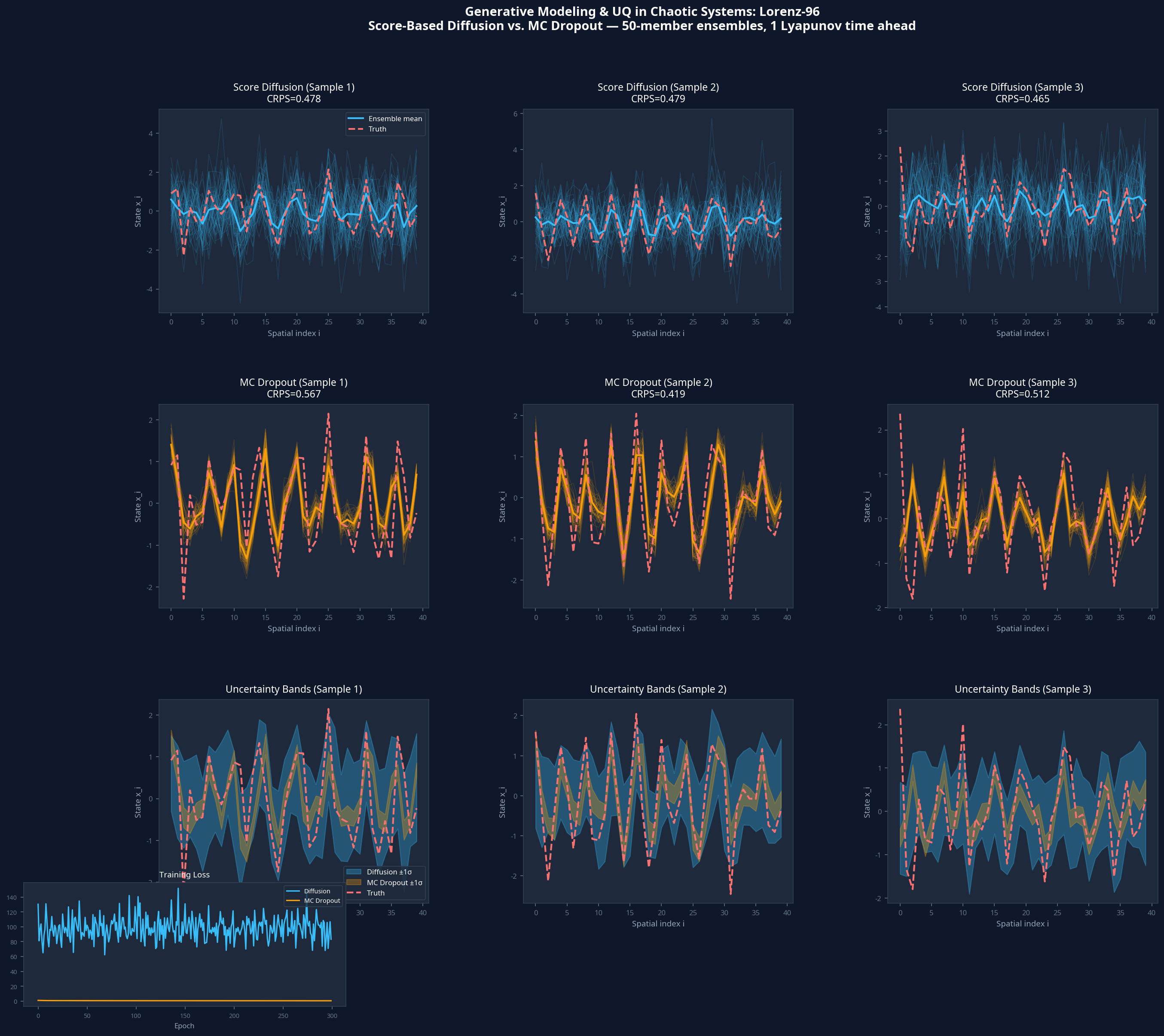

Benchmark & Results

Setup

Lorenz-96 (40-dimensional), probabilistic forecasting
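To make the setup concrete, here is a minimal sketch of the Lorenz-96 system and of why a single deterministic forecast is not enough. It is not taken from diffusion_uq_lorenz96.py: it uses only the Python standard library rather than PyTorch, and the forcing F = 8, the step size 0.01, the RK4 integrator, and all function names are illustrative assumptions.

```python
import math


def lorenz96_rhs(x, forcing=8.0):
    """Tendency dx_i/dt = (x_{i+1} - x_{i-2}) * x_{i-1} - x_i + F on a cyclic grid."""
    n = len(x)
    # Negative indices wrap around in Python, which handles x[i-1] and x[i-2].
    return [(x[(i + 1) % n] - x[i - 2]) * x[i - 1] - x[i] + forcing
            for i in range(n)]


def rk4_step(x, dt, forcing=8.0):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    def shifted(k, scale):
        return [xi + scale * ki for xi, ki in zip(x, k)]

    k1 = lorenz96_rhs(x, forcing)
    k2 = lorenz96_rhs(shifted(k1, 0.5 * dt), forcing)
    k3 = lorenz96_rhs(shifted(k2, 0.5 * dt), forcing)
    k4 = lorenz96_rhs(shifted(k3, dt), forcing)
    return [xi + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]


def integrate(x, n_steps, dt, forcing=8.0):
    """Integrate for n_steps and return the final state."""
    for _ in range(n_steps):
        x = rk4_step(x, dt, forcing)
    return x


def distance(a, b):
    """Euclidean distance between two states."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


# Assumed configuration: 40 variables, forcing F = 8 (a common chaotic regime).
N, F, DT, STEPS = 40, 8.0, 0.01, 1000

base = [F] * N
base[0] += 0.01              # kick the state off the unstable fixed point x_i = F
perturbed = list(base)
perturbed[0] += 1e-6         # tiny initial-condition error

initial_gap = distance(base, perturbed)
final_a = integrate(base, STEPS, DT, F)
final_b = integrate(perturbed, STEPS, DT, F)
final_gap = distance(final_a, final_b)

print(f"initial gap: {initial_gap:.1e}, gap after {STEPS * DT:.0f} time units: {final_gap:.3f}")
```

Two initial states that differ by one part in a million end up far apart after ten model time units, which is the motivation for forecasting a distribution over trajectories rather than one trajectory.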

Result

Lower continuous ranked probability score (CRPS) and a better spread-skill relationship than the MC Dropout baseline
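Both metrics can be computed directly from ensemble members. The sketch below is an illustration under stated assumptions, not the lecture's evaluation code: it uses the standard ensemble estimator of CRPS for scalar forecasts and defines the spread-skill ratio as root-mean ensemble variance divided by the RMSE of the ensemble mean (conventions vary, and some add a finite-ensemble correction). The function names and the synthetic Gaussian data are hypothetical.

```python
import math
import random


def crps_ensemble(members, obs):
    """Ensemble CRPS estimate: mean |x_j - y| minus half the mean |x_j - x_k|."""
    m = len(members)
    accuracy = sum(abs(x - obs) for x in members) / m
    sharpness = sum(abs(a - b) for a in members for b in members) / (2 * m * m)
    return accuracy - sharpness


def spread_skill_ratio(ensembles, observations):
    """Root-mean ensemble variance over RMSE of the ensemble mean (ideal: near 1)."""
    var_sum, sq_err_sum = 0.0, 0.0
    for members, obs in zip(ensembles, observations):
        m = len(members)
        mean = sum(members) / m
        var_sum += sum((x - mean) ** 2 for x in members) / (m - 1)
        sq_err_sum += (mean - obs) ** 2
    n = len(observations)
    return math.sqrt(var_sum / n) / math.sqrt(sq_err_sum / n)


def mean_crps(ensembles, observations):
    """Average CRPS over all forecast cases (lower is better)."""
    scores = [crps_ensemble(e, y) for e, y in zip(ensembles, observations)]
    return sum(scores) / len(scores)


# Synthetic check: truth ~ N(0, 1); one ensemble is calibrated, one is too narrow.
rng = random.Random(0)
CASES, MEMBERS = 2000, 20
truth = [rng.gauss(0.0, 1.0) for _ in range(CASES)]
calibrated = [[rng.gauss(0.0, 1.0) for _ in range(MEMBERS)] for _ in range(CASES)]
overconfident = [[rng.gauss(0.0, 0.3) for _ in range(MEMBERS)] for _ in range(CASES)]

ratio_cal = spread_skill_ratio(calibrated, truth)
ratio_over = spread_skill_ratio(overconfident, truth)
crps_cal = mean_crps(calibrated, truth)
crps_over = mean_crps(overconfident, truth)

print(f"calibrated:    spread-skill {ratio_cal:.2f}, CRPS {crps_cal:.3f}")
print(f"overconfident: spread-skill {ratio_over:.2f}, CRPS {crps_over:.3f}")
```

A ratio well below 1 signals an underdispersive (overconfident) ensemble, and CRPS penalizes it as well, since it rewards sharp forecasts only when they are also reliable.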

Lecture Slides

The full slide deck for this lecture is available as a PDF. Each slide includes speaker notes for the presenter.

Code

The annotated implementation for this lecture is in diffusion_uq_lorenz96.py. All code is written in PyTorch and prioritizes clarity over cleverness.

    # diffusion_uq_lorenz96.py
    # See the attached file for the full annotated implementation.
    # Key classes and functions are documented with docstrings.

References

- [1] Song, Y., Sohl-Dickstein, J., Kingma, D. P., Kumar, A., Ermon, S., & Poole, B. (2021). Score-Based Generative Modeling through Stochastic Differential Equations. ICLR 2021. arXiv:2011.13456

- [2] Ho, J., Jain, A., & Abbeel, P. (2020). Denoising Diffusion Probabilistic Models. NeurIPS 2020. arXiv:2006.11239

- [3] Price, I., et al. (2025). Probabilistic weather forecasting with machine learning (GenCast). Nature. arXiv:2312.15796

Cite As

If you use this lecture material in your research or teaching, please cite the primary reference:

    @misc{jing2025sciml4,
      title = {Lecture 4: Generative Modeling \& UQ},
      author = {Jing, Cheng},
      year = {2025},
      note = {An Intro Course to Scientific Machine Learning, Arizona State University},
      url = {https://jessecj.me/course/lecture-4-generative-uq},
      howpublished = {\url{https://jessecj.me/course/lecture-4-generative-uq}}
    }