Overview

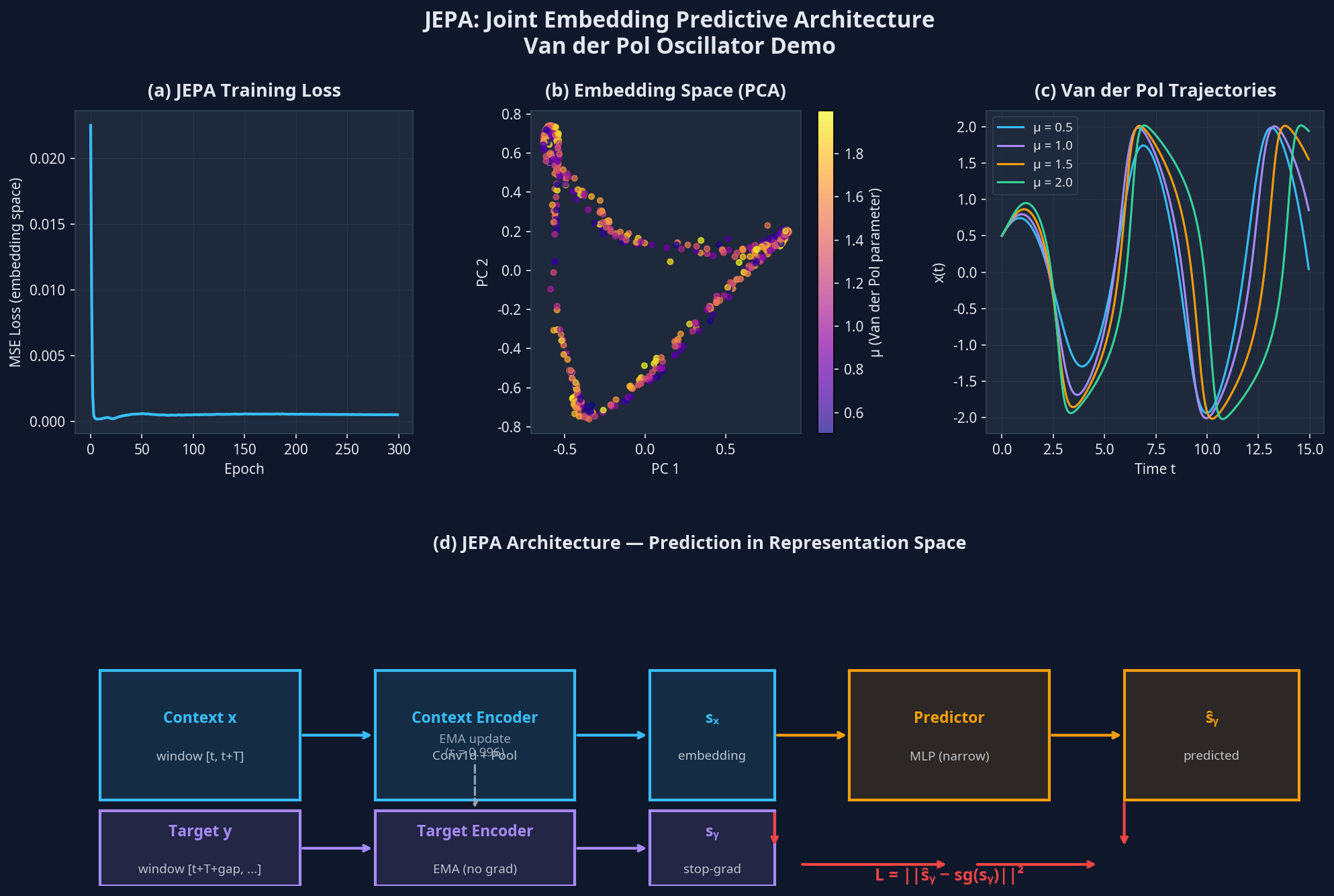

This lecture turns to the broader question of how intelligent systems model the world. LeCun's Joint Embedding Predictive Architecture (JEPA) [1] argues that prediction should occur in latent space rather than pixel space, a principle that carries over directly to SciML, where we seek compact representations of physical dynamics. The lecture covers the four prediction architectures, the mechanisms that prevent representation collapse (an exponential moving average (EMA) target encoder and stop-gradient), and the I-JEPA [2] and V-JEPA [3] instantiations.
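The collapse-prevention mechanisms named above can be illustrated with a minimal PyTorch sketch. This is not the contents of jepa_demo.py: the toy MLP encoders, the feature-masking scheme, the squared-error loss, and the momentum value 0.99 are all assumptions chosen for brevity. The sketch only shows the three ingredients together: a predictor operating on latent vectors, a stop-gradient on the target branch, and an EMA update of the target encoder.

```python
import copy

import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)


def make_encoder(in_dim=16, latent_dim=8):
    # Toy MLP encoder (assumption); a stand-in for a real image or video encoder.
    return nn.Sequential(nn.Linear(in_dim, 32), nn.ReLU(), nn.Linear(32, latent_dim))


context_encoder = make_encoder()
target_encoder = copy.deepcopy(context_encoder)  # starts as an exact copy
for p in target_encoder.parameters():
    p.requires_grad_(False)  # the target encoder is never trained by backprop

# The predictor maps context latents to predicted target latents.
predictor = nn.Sequential(nn.Linear(8, 32), nn.ReLU(), nn.Linear(32, 8))

optimizer = torch.optim.Adam(
    list(context_encoder.parameters()) + list(predictor.parameters()), lr=1e-3
)


@torch.no_grad()
def ema_update(target, online, momentum=0.99):
    # target <- momentum * target + (1 - momentum) * online
    for p_t, p_o in zip(target.parameters(), online.parameters()):
        p_t.mul_(momentum).add_(p_o, alpha=1.0 - momentum)


def train_step(x_context, x_target):
    s_x = context_encoder(x_context)
    with torch.no_grad():  # stop-gradient: no gradient flows into the target branch
        s_y = target_encoder(x_target)
    # The loss is computed between latent vectors, not between raw inputs.
    loss = F.mse_loss(predictor(s_x), s_y)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    ema_update(target_encoder, context_encoder)
    return loss.item()


# Hypothetical data: the context view hides the second half of each input.
x = torch.randn(64, 16)
x_context = x.clone()
x_context[:, 8:] = 0.0
losses = [train_step(x_context, x) for _ in range(5)]
```

Because the target encoder receives no gradients and only trails the context encoder through the EMA, the optimizer cannot directly drive both branches to the same constant output, which is the trivial solution these mechanisms are meant to discourage.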

Benchmark & Results

Setup

Van der Pol oscillator, latent representation learning

Result

Smooth parameter manifold in PCA embedding space
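As a rough illustration of this setup, the sketch below simulates the Van der Pol oscillator, x'' - mu (1 - x^2) x' + x = 0, for a sweep of the damping parameter mu and projects one feature vector per trajectory onto two principal components. The parameter range, initial condition, step size, and integrator are assumptions, and the flattened raw trajectories are only a placeholder for the learned latent vectors, which this page does not specify.

```python
import numpy as np


def van_der_pol(state, mu):
    # First-order form of x'' - mu * (1 - x^2) * x' + x = 0, with v = x'.
    x, v = state
    return np.array([v, mu * (1.0 - x**2) * v - x])


def simulate(mu, x0=(2.0, 0.0), dt=0.01, n_steps=2000):
    # Fixed-step fourth-order Runge-Kutta integration; returns (n_steps, 2).
    traj = np.empty((n_steps, 2))
    s = np.array(x0, dtype=float)
    for i in range(n_steps):
        traj[i] = s
        k1 = van_der_pol(s, mu)
        k2 = van_der_pol(s + 0.5 * dt * k1, mu)
        k3 = van_der_pol(s + 0.5 * dt * k2, mu)
        k4 = van_der_pol(s + dt * k3, mu)
        s = s + (dt / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
    return traj


def pca_project(features, n_components=2):
    # PCA via SVD of the mean-centered feature matrix (rows are samples).
    centered = features - features.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


# Hypothetical parameter sweep; the lecture's actual range is not given here.
mus = np.linspace(0.5, 3.0, 12)

# Placeholder features: one flattened trajectory per value of mu.
# In a JEPA experiment these rows would be encoder outputs instead.
features = np.stack([simulate(mu).ravel() for mu in mus])
embedding = pca_project(features)  # shape (12, 2), one point per mu
```

Plotting `embedding` colored by `mus` is one way to check whether nearby parameter values land near each other, which is what a smooth parameter manifold would look like in this projection.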

Lecture Slides

The full slide deck for this lecture is available as a PDF. Each slide includes speaker notes for the presenter.

Code

The annotated implementation for this lecture is in jepa_demo.py. All code is written in PyTorch and prioritizes clarity over cleverness.

# jepa_demo.py
# See the attached file for the full annotated implementation.
# Key classes and functions are documented with docstrings.

References

- [1] LeCun, Y. (2022). A Path Towards Autonomous Machine Intelligence. OpenReview (position paper).

- [2] Assran, M., et al. (2023). Self-Supervised Learning from Images with a Joint-Embedding Predictive Architecture. CVPR 2023. arXiv:2301.08243.

- [3] Bardes, A., et al. (2024). V-JEPA: Latent Video Prediction for Visual Representation Learning. ICLR 2024.

Cite As

If you use this lecture material in your research or teaching, please cite it as:

@misc{jing2025sciml3,
  title = {Lecture 3: World Models \& JEPA},
  author = {Jing, Cheng},
  year = {2025},
  note = {An Intro Course to Scientific Machine Learning, Arizona State University},
  url = {https://jessecj.me/course/lecture-3-world-models-jepa},
  howpublished = {\url{https://jessecj.me/course/lecture-3-world-models-jepa}}
}